> From the WeatherWatch archives

On a gloomy, wet day inside a quiet office in Lower Hutt, a handful of people go through data on their computers to produce something tens of thousands, if not hundreds of thousands, of us have grown to rely on almost every day – the size and depth of the latest earthquakes from around Christchurch and NZ.

This is the office of GeoNet – a brand that was hardly a household name just 9 months ago but now it’s one that many of us hear mentioned every day. For those of us who use social media, especially Twitter, the GeoNet logo has become as common as the Twitter logo itself.

A couple of weeks ago I flew to Wellington to meet with Kevin Fenaughty, GeoNet Data Centre Manager, to discuss how GeoNet has stood up to its first real test in over 20 years.

The last big damaging quake in New Zealand was the Edgecumbe earthquake on March 2 1987 (also a 6.3 quake). Of course back then we didn’t have the internet let alone Twitter and Facebook. Many of us didn’t even own a computer. And Edgecumbe, despite being badly damaged, was only a small town and simply doesn’t compare to what has happened in Christchurch with so much death and destruction.

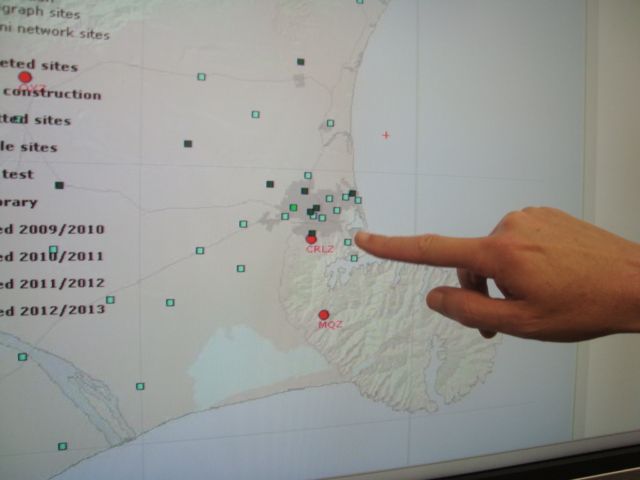

Image / Kevin Fenaughty points out the most recent earthquakes

In fact, while the magnitude rating of the February 22 Christchurch earthquake wasn’t extremely high, the force, or energy, that came from it was simply incredible – scientists say it may have been one of the most powerful earthquakes ever recorded… on the entire planet.

The peak ground acceleration from this earthquake was about twice the acceleration due to gravity, whereas in the earthquake last September it was about the same as gravity. This is a phenomenal statistic and partially explains why so many buildings collapsed.

But going back to the magnitude 7.1 quake which rocked Canterbury at 4:35am on Saturday September 4, 2010 – when this hit, no one at GeoNet actually knew if their technology was ready to handle an explosion of web traffic, let alone how their staff would handle not one, but two massive earthquakes over the next 6 months.

“The internet has played right into our hands…from the start we put it all on the net” says Mr Fenaughty, referring to their decision well over 10 years ago to put as much information as possible up on their website – www.Geonet.org.nz.

This way the public could be educated on the quake while GeoNet’s resources went into research, study and publishing the data. “We wanted to put every big earthquake and every earthquake that was felt on our website for people to see”.

So how did their website cope with such a huge volume of traffic? Surprisingly, everything went without a hitch. “Our site stood up to both the September and February quakes” he said. To me this is remarkable – a website that has never truly been tested coped with the world’s attention. There are other websites that cover weather or natural disaster emergencies here in New Zealand that fall over frequently – and they know their peak numbers.

Up until September 2010 if people felt an earthquake they would often contact Police or Civil Defence. More of us now know that we can access this quake data in a far more efficient manner via GeoNet, in near real time, when we feel, or hear about, an earthquake.

So, in this day and age – why is it near real time and not actually real time? “Every earthquake that goes up on our site, and therefore Facebook and Twitter too, has to be manually checked by one of our scientists” says Mr Fenaughty. But why? GeoNet doesn’t get too many complaints but one of the common ones is that they “hide” some information. What aren’t they telling us?! “We have 15,000 earthquakes every year, there is no point in having a big list if many of those quakes aren’t even felt” says Mr Fenaughty.

Not only that but, just like weather computer models, the computers and seismographs can only tell you so much.

Data Centre Technician Jennifer Coppola explained why that was. She says when a quake occurs, a number of seismographs (the number depends on the magnitude of the earthquake) pick up the motion. “Data that all our seismographs record are continuously being transmitted to our computer hub via satellite link, but to ‘trigger’ an earthquake, certain configurations of stations and magnitudes need to occur. Of these triggered events, duty officers are alerted to the larger earthquakes right away, when certain thresholds are exceeded. They publish the results to our website”.
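To give a feel for what a “trigger” might look like, here is a minimal sketch in Python. It is not GeoNet’s actual method, which the article does not describe in detail. It assumes a simple rule: an event is triggered only when enough different stations detect motion within a short time window. The function name, the station names, the station count of four and the 30 second window are all hypothetical.

```python
def is_triggered(picks, min_stations=4, window_s=30.0):
    """Return True if enough distinct stations detect motion close together.

    picks: list of (station_name, time_in_seconds) detections.
    min_stations and window_s are hypothetical values for illustration only.
    """
    ordered = sorted(picks, key=lambda pick: pick[1])
    for i, (_, start) in enumerate(ordered):
        # Collect the distinct stations that detected motion within the window
        # that opens at this detection.
        stations = {name for name, t in ordered[i:] if t - start <= window_s}
        if len(stations) >= min_stations:
            return True
    return False


# Four stations within a few seconds of each other: treated as an event.
quake = [("STA1", 0.0), ("STA2", 2.5), ("STA3", 4.0), ("STA4", 7.5)]
# One station spiking twice (say, a passing truck): not an event.
truck = [("STA1", 0.0), ("STA1", 1.0)]

print(is_triggered(quake), is_triggered(truck))
```

Requiring several stations to agree is one simple way to stop a single noisy instrument from raising a false alarm.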

Usually a week or so later, analysts review all triggered events, including the ones for which the duty officers have already derived a preliminary solution.

Once all the data are in it’s the job of Ms Coppola and others to more accurately compute the quake’s size and depth. The computers are bang on in some areas, not so flash in others. Humans are still needed. Humans are also needed to work out if that blip was an earthquake or just a truck rumbling past a seismograph. If that truck caused a spike on a graph and it was then instantly displayed online the public may panic – or worse still, lose faith in the data coming out of GeoNet.

With so many aftershocks, Ms Coppola and the team at GeoNet are only now seeing the light after several weeks of checking and double checking all of the earthquakes. It’s this extra work that means the sizes of some quakes get revised days or even weeks later – as we saw recently in Japan when the USGS said it was 8.9, then raised it to 9.0.

GeoNet is not staffed 24/7. In fact there’s a team of seven or eight duty officers who, over the past several months, have had similar experiences to parents of a newborn baby with many restless nights.

When an earthquake strikes at two in the morning the computers work out two things – 1) How big was it? 2) Are people likely to have felt it? If it was big enough and likely to have been felt, the computer sends an urgent message to the pager of the duty officer currently on the roster. This duty officer wakes up, turns on their laptop and goes through the fairly short process of double checking if the computer was right.
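The two-question check can be sketched in a few lines of Python. This is only an illustration: the article does not give GeoNet’s real thresholds, so the magnitude cut-off of 2.5 and the “felt” cut-off of intensity 3 on the Modified Mercalli scale are assumptions, as are the names `AutomaticSolution` and `should_page_duty_officer`.

```python
from dataclasses import dataclass

# Hypothetical thresholds, chosen only for illustration.
PAGE_MAGNITUDE = 2.5   # question 1: how big was it?
FELT_INTENSITY = 3     # question 2: are people likely to have felt it?


@dataclass
class AutomaticSolution:
    """What the computer has worked out before a human checks it."""
    magnitude: float
    peak_intensity: int  # estimated strongest shaking where people live


def should_page_duty_officer(solution: AutomaticSolution) -> bool:
    """Page the duty officer only if both questions are answered yes."""
    big_enough = solution.magnitude >= PAGE_MAGNITUDE
    likely_felt = solution.peak_intensity >= FELT_INTENSITY
    return big_enough and likely_felt


# A shallow quake under a city is paged; a tiny or unfelt one is not.
print(should_page_duty_officer(AutomaticSolution(magnitude=4.5, peak_intensity=5)))
print(should_page_duty_officer(AutomaticSolution(magnitude=4.0, peak_intensity=1)))
```

Keeping the two questions as separate named checks makes it easy to see which one stopped a page from being sent.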

Once a scientist has verified it, it is posted to the internet and social media websites. So every update you see has been verified by a human being. From the very shallow 2.5 quake in Christchurch to the big 6.8 quake 300km deep under the earth’s surface. They check them all.

With the hundreds and hundreds of aftershocks since Sept 4 and again since Feb 22 this fairly small team has had many restless nights.

During the days and weeks immediately following both of these powerful quakes it was normal to be woken four or five times a night – and every time that pager goes off they must turn on the computer, check the data, verify it, then upload to the website. Broken sleeps are a way of life for the GeoNet team these days, although with things slowly starting to quieten down, hopefully their sleeps – along with those of the residents of Christchurch – are getting longer and less interrupted. This team is acutely aware of the many thousands of people who now rely on them every day. Certainty, of any kind, is so important in these uncertain times.

On the GeoNet website people are asked to fill out a form when they’ve felt a quake. I was curious as to who reads all those reports. Again, it’s the computers. Those reports go into a specialised computer database and using an algorithm they are converted into intensity values of how that quake was felt and where it was felt, then that is displayed on the website.
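As a rough illustration of how a pile of felt reports could become one intensity value per place, here is a small Python sketch. It is not GeoNet’s algorithm, which the article does not describe. It assumes each report has already been scored as an intensity number from the form’s answers, and it simply takes the middle value reported for each location. The function name and the sample reports are made up.

```python
from collections import defaultdict
from statistics import median_low


def intensity_by_location(reports):
    """Summarise felt reports as one intensity value per location.

    reports: list of (location, intensity) pairs, where intensity is a whole
    number already derived from the form (an assumption of this sketch).
    """
    grouped = defaultdict(list)
    for location, intensity in reports:
        grouped[location].append(intensity)
    # median_low always returns a value someone actually reported, and it is
    # not thrown off by one or two exaggerated reports.
    return {location: median_low(values) for location, values in grouped.items()}


# Made-up reports for illustration only.
reports = [
    ("Christchurch", 6),
    ("Christchurch", 7),
    ("Christchurch", 6),
    ("Timaru", 3),
]
print(intensity_by_location(reports))
```

Using the middle value rather than the average means a single report of extreme shaking does not drag the whole suburb’s figure up.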

It’s not just the public that have found GeoNet so helpful over these past several months. Those in the media that I’ve spoken to have high praise for them too. Not only are GeoNet working the long hours and giving many of us comfort at any hour of the day when the ground has moved, but they ‘get it’ when it comes to dealing with the public. Everything is freely available to the public. It is for the good of the people.

They enjoy speaking to the media too and found it particularly distressing when the Ken Ring saga emerged and for no factual reason the scientific community was painted as “evil” by a number of New Zealanders who jumped in to defend an old man being yelled at by John Campbell.

Whenever I hear people saying “don’t trust the scientific community” I can’t help but think we’re back in the days of burning witches and the earth is flat.

From my point of view the Ring drama was a media creation – and then the media turned on him. Ring’s a nice fellow but didn’t help his cause by flip-flopping and denying his own actual quotes.

As I said before, during uncertain times we cling to any certainty – even if that certainty is likely to be false. Anything is better than nothing.

But GeoNet has forecast the aftershocks – and with high accuracy. They are the only ones with a consistent track record that I’m aware of.

During significant earthquake events we can trust that everything important will be on their website – that their website will stand up to the traffic – and that the information is free. This isn’t the case with all our scientific departments in this country – but the GeoNet team, and the Government, have their priorities in the right order here.

The GeoNet story is one that we, as New Zealanders, should be proud of. They have forecast the aftershocks since September 4 with high accuracy. Their website stood up to unprecedented web traffic following two massive earthquakes, including our costliest and deadliest in 80 years, without overloading and crashing. All of this, from a quiet office in Lower Hutt… and from the homes of those duty officers in the middle of the night when the earth wakes them up.

– Philip Duncan, WeatherWatch.co.nz

Social Media Stats

Sara Page is the Outreach Coordinator for the GeoNet Project. Sara’s the human being behind the GeoNet Facebook and Twitter websites – and often the one that replies to your questions.

FACEBOOK

GeoNet joined Facebook in March 2010 following the Chilean tsunami. They were asking for people’s photos and videos, to better understand the effects of tsunami in NZ from offshore quakes. At this stage they had around 400 followers. After the Sept 4th quake it rocketed to 4000 followers.

As of today GeoNet are up to 7,760 followers and have a steady stream of comments and queries via the homepage and discussion tab. “It can get pretty full on, and at the moment it’s mostly myself doing it in my free time” says Sara Page.

Ms Page says the hardest thing they’ve found so far is that Facebook and Twitter aren’t nine to five. “If there is a large event I’ll jump on and add comments and make sure people know we are working on the earthquake’s location etc. and keep the questions answered. We are looking into a way to better ‘man’ this resource in the future”.

TWITTER

As of today they have 5,770 followers on Twitter and they are frequently re-tweeted. All of the earthquakes posted from the website go automatically to Twitter and Facebook.

GeoNet also recently created two new Twitter accounts @geonet_above4 and @geonet_above5 as people were sick of getting all of the earthquake texts.

Comments

Before you add a new comment, take note this story was published on 22 Apr 2011.

Guest on 22/04/2011 4:33am

Thanks for a very good article, that is very informative.

FYI, I just spied the following link on Wikipedia:

http://en.wikipedia.org/wiki/Peak_ground_acceleration

This article explains ground acceleration in an earthquake, and how it is measured.

It is interesting to note that the 2011 Tohoku earthquake in March (M9.0) that caused the Pacific-wide tsunami had a peak ground acceleration of 2.7g in a single direction, compared to 2.2g for the Chch earthquake in February (M6.3) and 1.26g for the Canterbury earthquake in September 2010 (M7.1). It is also interesting to note that other major quakes like Alaska 1964 and Chile 1960 (M9.2 and 9.5 respectively) had ground accelerations of only 0.18g and 0.3g respectively. I reckon that the technology that was around 50 years ago wasn’t capable of measuring ground acceleration like it can be measured these days. But still, the 2010 Chile earthquake (M8.8, Pacific-wide tsunami generated) only registered 0.78g and the 2010 Haiti earthquake (M7.0, 100,000-300,000 killed) only registered 0.5g.

To me, it seems as if there is very little correlation between the magnitude on the Richter scale and the measured ground acceleration – particularly for the ‘larger’ quakes. It seems that if a large (>~M6) quake strikes close to a major city with a peak ground acceleration of >2g, there will be catastrophic damage with casualties, even if the building codes are strictly enforced. Whereas if the ground acceleration of a massive quake (>~M8) is not that high and the epicentre is more remote, the risk of casualties and catastrophic damage is greatly reduced.

This is all very interesting stuff.

WW Forecast Team on 22/04/2011 6:46pm

Excellent information and thanks for the link 🙂

Cheers

Richard @ WW
